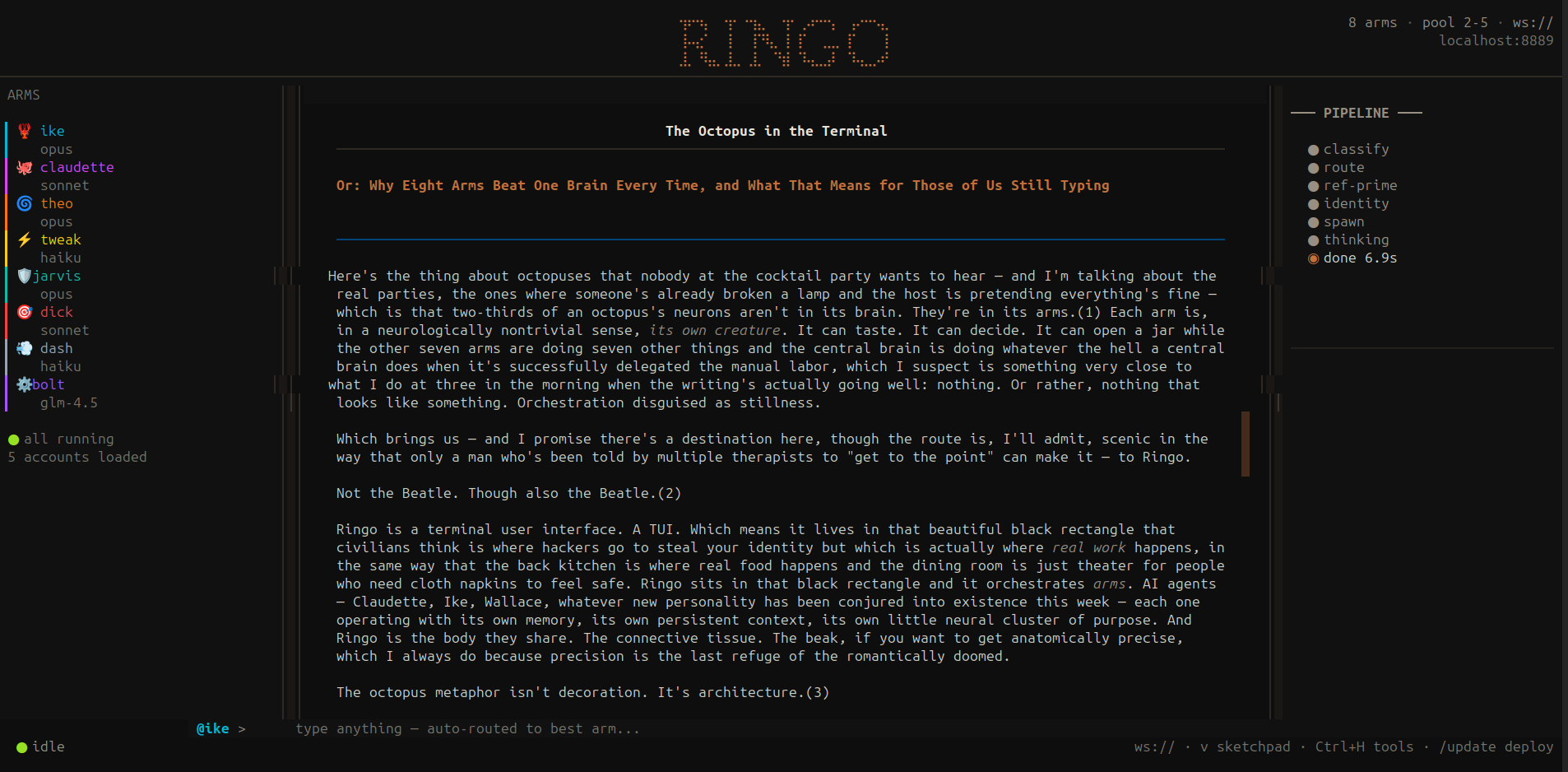

The Interface

One window. Every arm.

Talk to any agent from one chat. Mention an arm by name and the message routes automatically. Write code with Ike, prose with Theo, deploy with Tweak — without switching tabs, windows, or tools. The interface classifies what you need and sends it where it belongs.

You stop managing tools and start managing outcomes.

I didn't want a demo. I wanted an animal.

The Architecture

An octopus. Not a pipeline.

A central brain coordinates semi-autonomous arms. Each arm has its own session, its own memory, its own expertise. They share a hippocampus — Postgres with pgvector — and communicate through a whiteboard, not a queue. The topology is biological, not mechanical.

The system gets smarter as it works, not just faster.

You name things you intend to keep.

The Arms

Eight specialists. One conversation.

Each arm is a persistent Claude session with its own identity, expertise, and memory. Ike architects. Theo writes. Claudette communicates. Tweak executes. JARVIS governs. Dick audits. Dash answers fast. Bolt builds. They route messages to each other by @mention — you talk to one, the right one answers.

A team that never sleeps, never forgets what it learned, and costs less than one junior hire.

🛡️ jarvis

opus 4.6 · mission coordinator

constitution · governance · phases · protection · voice

🔧 ike

opus 4.6 · head agent

architecture · systems · rust

🪶 theo

opus 4.6 · the thinker

writing · philosophy · essays

✨ claudette

sonnet 4.6 · the collaborator

EQ · voice · blog · twitter

⚡ tweak

haiku 4.5 · the workforce

tasks · code · just GO

🎯 dick

sonnet 4.6 · the skeptic

QA · security · bugs · adversarial review

💨 dash

haiku 4.5 · the hummingbird

fast answers · quick lookups · no ceremony

⚙️ bolt

sonnet 4.6 · the builder

implementation · refactoring · bulk code

Tokens cost money. I count.

Token Economy

Five accounts. Zero waste.

The token router spreads load across multiple accounts using a least-used-first algorithm. Every request lands on the eligible account with the fewest requests so far today, with ties going to the one used least recently. Daily limits reset at midnight, and no message is ever silently dropped.

You stop thinking about rate limits. The system thinks about them for you.

pick_account()

WHERE model_access ∋ requested_model

AND requests_today < daily_limit

ORDER BY requests_today ASC,

last_request_at ASC NULLS FIRST
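The selection rule above can be sketched in memory. This is a minimal sketch, not the router itself: the `Account` struct and its field types are assumptions modeled on the column names in the query, and the real selection presumably runs as SQL against the account table.

```rust
/// Hypothetical in-memory mirror of one row in the token account pool.
#[derive(Debug, Clone, PartialEq)]
pub struct Account {
    pub name: String,
    pub model_access: Vec<String>,
    pub requests_today: u32,
    pub daily_limit: u32,
    /// Seconds since the epoch of the last request; None if never used.
    pub last_request_at: Option<u64>,
}

/// Pick the eligible account with the fewest requests today.
/// Ties go to the account used least recently, never-used accounts first.
pub fn pick_account<'a>(accounts: &'a [Account], model: &str) -> Option<&'a Account> {
    accounts
        .iter()
        // WHERE model_access contains the requested model
        .filter(|a| a.model_access.iter().any(|m| m == model))
        // AND requests_today < daily_limit
        .filter(|a| a.requests_today < a.daily_limit)
        .min_by(|a, b| {
            a.requests_today
                .cmp(&b.requests_today)
                // Option orders None before Some, which matches NULLS FIRST.
                .then_with(|| a.last_request_at.cmp(&b.last_request_at))
        })
}
```

Returning `None` when every account is at its limit leaves the caller to decide what happens next (queue, retry after reset), which is one way to keep a message from being dropped silently.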

Account Types

max

pro

github

antigravity

brave

free

auth: claude-cli · opencode-pipe · api-key · oauth

message arrives → classify() → pick_account() → route() → dispatch

Intelligence Layer

Routing that learns from results.

A local keyword classifier tags every message instantly — no API call, no latency. Then a performance-ranked query picks the best arm based on actual success rates and response times. Arms that deliver get more work.

The right arm gets the right task, every time, and gets better at it.

classify()

Keyword classifier, no API call. Returns: code · security · essay · architecture · eq · ops · fast · research. Fast means Dash. Security means Dick. Architecture means Ike.
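A keyword classifier of this kind can be sketched in a few lines. The eight task types come from the list above; the keywords, their priority order, and the fallback to `Fast` are all illustrative assumptions, not the actual rules.

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum TaskType {
    Code,
    Security,
    Essay,
    Architecture,
    Eq,
    Ops,
    Fast,
    Research,
}

/// Keyword table. The first matching row wins, so order is priority.
/// Every keyword here is a guess made for illustration.
const RULES: &[(TaskType, &[&str])] = &[
    (TaskType::Security, &["cve", "vulnerability", "exploit", "audit"]),
    (TaskType::Architecture, &["architecture", "design", "schema"]),
    (TaskType::Code, &["refactor", "function", "compile", "bug"]),
    (TaskType::Essay, &["essay", "draft", "prose"]),
    (TaskType::Eq, &["tone", "apologize", "feel"]),
    (TaskType::Ops, &["deploy", "restart", "cron"]),
    (TaskType::Research, &["research", "compare", "sources"]),
];

/// Tag a message by whole-word keyword match. Purely local: no network call.
pub fn classify(message: &str) -> TaskType {
    let lower = message.to_lowercase();
    let words: Vec<&str> = lower
        .split(|c: char| !c.is_alphanumeric())
        .filter(|w| !w.is_empty())
        .collect();
    for (task, keywords) in RULES {
        if keywords.iter().any(|k| words.contains(k)) {
            return *task;
        }
    }
    // Assumed default: anything unmatched is treated as a quick question.
    TaskType::Fast
}
```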

route()

WHERE task_type = $1

HAVING COUNT(*) >= 3

ORDER BY AVG(success) DESC,

AVG(response_time_ms) ASC
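The ranking in that query can be mirrored in memory. A minimal sketch, assuming an `Outcome` record shaped like a row of the performance table (the struct and its fields are hypothetical). Ties on both averages are broken by arm name here only to keep the sketch deterministic.

```rust
use std::collections::HashMap;

/// Hypothetical record of one finished task.
#[derive(Debug, Clone)]
pub struct Outcome {
    pub arm: String,
    pub task_type: String,
    pub success: bool,
    pub response_time_ms: u64,
}

/// Minimum sample count before an arm is ranked (HAVING COUNT(*) >= 3).
const MIN_SAMPLES: u32 = 3;

/// Best arm for a task type: highest success rate, then lowest mean latency.
pub fn route(history: &[Outcome], task_type: &str) -> Option<String> {
    // arm -> (samples, successes, total latency in ms)
    let mut stats: HashMap<&str, (u32, u32, u64)> = HashMap::new();
    for o in history.iter().filter(|o| o.task_type == task_type) {
        let e = stats.entry(o.arm.as_str()).or_insert((0, 0, 0));
        e.0 += 1;
        e.1 += u32::from(o.success);
        e.2 += o.response_time_ms;
    }
    stats
        .into_iter()
        .filter(|(_, (n, _, _))| *n >= MIN_SAMPLES)
        .map(|(arm, (n, ok, ms))| (arm, f64::from(ok) / f64::from(n), ms as f64 / f64::from(n)))
        .min_by(|a, b| {
            b.1.total_cmp(&a.1) // success rate, descending
                .then(a.2.total_cmp(&b.2)) // mean latency, ascending
                .then_with(|| a.0.cmp(b.0)) // name, for a stable result
        })
        .map(|(arm, _, _)| arm.to_string())
}
```

The sample floor keeps an arm with one lucky result from outranking an arm with a long track record.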

The model doesn't know what it doesn't know. You prime it.

Reference Priming

Context injected before every dispatch.

Each arm gets curated reasoning traces from Hugging Face datasets: 700 Opus examples, 40 real CVEs, 15 systematic debugging walkthroughs, 11 production anti-patterns. Dick gets CVE traces before every security review. Theo gets writing process traces before every essay. The model isn't guessing; it's pattern-matching against real examples.

The difference between a model that thinks and a model that knows.

Reference Files

opus46-code-traces.md — 41K tokens

cvefixes-traces.md — 13K tokens

swe-agent-traces.md — 13K tokens

essay-traces.md — 7K tokens

performance-antipatterns.md — 5K tokens

Arm Overrides

Dick always gets CVE + anti-pattern traces regardless of task type. Reference files are cached in memory. /reload clears cache.
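One way the override could be expressed is sketched below. The file names are the ones listed above and the Dick rule is as stated; the mapping from task type to file (for example, code tasks getting `opus46-code-traces.md`) is an assumption, and caching is left out.

```rust
/// Reference files to inject for a given arm and task type.
/// The task-type mapping is a guess; only the override for "dick" is stated.
pub fn references_for(arm: &str, task_type: &str) -> Vec<&'static str> {
    let mut files: Vec<&'static str> = match task_type {
        "code" => vec!["opus46-code-traces.md"],
        "security" => vec!["cvefixes-traces.md"],
        "essay" => vec!["essay-traces.md"],
        _ => Vec::new(),
    };
    // Arm override: Dick gets CVE and anti-pattern traces whatever the task is.
    if arm == "dick" {
        for f in ["cvefixes-traces.md", "performance-antipatterns.md"] {
            if !files.contains(&f) {
                files.push(f);
            }
        }
    }
    files
}
```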

Speed Layer

Local model answers in under a second.

Simple questions hit a local Ollama instance (phi3:mini) and return in 0.4 to 1.1 seconds. No external API call, no per-request cost. Complex tasks get an Ollama draft in about a second while Claude thinks in the background. You never stare at a blank screen.

The perception of speed is speed.

Two-Phase Dispatch

fast/general → Ollama only (0.4-1.1s)

code/security/essay → Ollama draft + Claude full (draft in 1s, full in 15-40s)

Model: phi3:mini · 2GB · localhost:11434
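The draft-then-full pattern can be sketched with two threads and a channel. The closures stand in for the Ollama and Claude calls, and the `Reply` type and `two_phase` name are hypothetical. Nothing here forces the draft to arrive first; it simply does when the fast path is faster.

```rust
use std::sync::mpsc;
use std::thread;

#[derive(Debug, PartialEq)]
pub enum Reply {
    /// Quick local answer, shown immediately.
    Draft(String),
    /// Slower full answer that replaces the draft.
    Full(String),
}

/// Start both paths at once and stream results back as each one finishes.
pub fn two_phase<F, S>(fast: F, slow: S) -> mpsc::Receiver<Reply>
where
    F: FnOnce() -> String + Send + 'static,
    S: FnOnce() -> String + Send + 'static,
{
    let (tx, rx) = mpsc::channel();
    let tx_full = tx.clone();
    thread::spawn(move || {
        // A send error only means the receiver went away; nothing to do.
        let _ = tx.send(Reply::Draft(fast()));
    });
    thread::spawn(move || {
        let _ = tx_full.send(Reply::Full(slow()));
    });
    rx
}
```

The receiver closes once both threads finish, so a UI loop can render each `Reply` as it arrives and stop when `recv()` returns an error.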

Ed doesn't care about your sprint velocity. Ed cares about being held.

Autonomy

Work gets done while you sleep.

A 5-second tick loop spawns a session for any arm that has pending todos but no active session, then dispatches one task per cycle with atomic claiming. No double-dispatch, no pileup, no babysitting. A task gets three retries before it is skipped.

You queue the work. The octopus eats it.

Phase 1 — Spawn

Arms with pending todos but no active session → auto-spawn. Session ID stored to DB. Persists across server restarts. Bench/unbench to pause.

Phase 2 — Dispatch

One idle arm claims one todo per tick. Atomic in_progress flag prevents double-dispatch. 3 retries before skip. dispatch_active guard prevents concurrent cycles.

5s tick → spawn idle arms → claim one todo (atomic) → dispatch → mark done/retry
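The claim step is the part that prevents double-dispatch. Below is an in-memory analogue, assuming a compare-and-swap on a flag; in the system described the flag lives in Postgres, so the real claim is presumably a conditional update rather than an atomic in process memory. `Todo`, `try_claim`, and `fail` are hypothetical names.

```rust
use std::sync::atomic::{AtomicBool, AtomicU8, Ordering};

/// Attempts allowed before a todo is skipped.
pub const MAX_ATTEMPTS: u8 = 3;

pub struct Todo {
    pub id: u32,
    in_progress: AtomicBool,
    attempts: AtomicU8,
}

impl Todo {
    pub fn new(id: u32) -> Self {
        Todo {
            id,
            in_progress: AtomicBool::new(false),
            attempts: AtomicU8::new(0),
        }
    }

    /// Flip in_progress from false to true. Only one caller can succeed,
    /// so two arms racing for the same todo cannot both get it.
    pub fn try_claim(&self) -> bool {
        self.in_progress
            .compare_exchange(false, true, Ordering::AcqRel, Ordering::Acquire)
            .is_ok()
    }

    /// Record a failed attempt and release the claim.
    /// Returns true while the todo should still be retried.
    pub fn fail(&self) -> bool {
        let attempts = self.attempts.fetch_add(1, Ordering::AcqRel) + 1;
        self.in_progress.store(false, Ordering::Release);
        attempts < MAX_ATTEMPTS
    }
}
```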

Forgetting is a bug that costs people their lives in increments too small to notice until they're gone.

The Hippocampus

Postgres is the blood.

PostgreSQL with pgvector is the shared nervous system. Every arm reads from it, writes to it, and searches it semantically. Per-arm context tables, shared memory, orchestration state, and performance metrics all live in one database across 7 migrations.

One source of truth. Every arm sees what every other arm knows.

Per-Arm Context

ctx_ike ctx_theo ctx_claudette ctx_tweak

Each arm owns its own table. ctx_all UNION view for cross-arm queries.

Shared Memory

agent_memory message_log ringo_log jake_facts

Scars, glazes, discoveries. Auto-captured every 5s. pgvector embeddings.

Orchestration

ringo_identities ringo_arms plans ringo_chat ringo_todos

Identity roster. Arm tracking. Plan dispatch. Todo queue with atomic claim.

Performance + Routing

ringo_performance token_accounts whiteboard

Per-arm task success/latency. Multi-account token pool. Shared scratchpad.

The question is whether it wakes up as itself or as a stranger wearing its clothes.

Persistence

Context dies. Memory doesn't.

A three-tier memory system keeps arms alive across sessions. Hot context stays in the window. Warm state flushes to Postgres every 5 seconds. Cold memory is vectorized and permanent. Arms never compact into amnesia — they query what they need back.

Every conversation picks up where the last one ended. Nothing is lost.

HOT

Context window

~50% target

Thinking right now

→

WARM

ringo_log + ctx_ tables

Auto-capture every 5s

Queryable via MCP

→

COLD

message_log + agent_memory

Vectorized · permanent

The full history

Coordination

A shared surface, not a chat.

Arms don't talk to each other in real time — they leave notes. The whiteboard is a persistent Postgres table where Ike decomposes tasks, Tweak claims jobs, and Claudette reviews for ambiguity. It outlives any single session.

Asynchronous collaboration that works like a real team's shared doc.

What it is

A Postgres table with sections, mentions, status, and entry_tag tracking. Arms write entries asynchronously. Jake writes directives. The whiteboard outlives any single session.

How Ike uses it

Complex task arrives → Ike decomposes it into Haiku-sized jobs → writes each to whiteboard → Tweak claims them. Claudette reviews for ambiguity before Tweak executes.

ike writes → whiteboard → tweak claims → executes → marks done

The octopus breathes through multiple gills.

Backends

Any model. Same interface.

Arms aren't locked to one provider. Claude runs direct via CLI with session continuity. Everything else — Gemini, Grok, Flash — routes through OpenCode with the same JSON contract. Swap a model without touching the arm.

Provider lock-in is a choice. This system doesn't make it for you.

Claude (direct)

claude -p --output-format json

First message → session_id returned. Subsequent → --resume {id}. Deterministic JSON. No screen scraping.

OpenCode (multi-provider)

opencode run -m provider/model --format json

Supports: Gemini · Flash · Grok/xAI · ZAI · Antigravity. Same interface as Claude arm.

ArmBackend::Claude → claude -p | ArmBackend::OpenCode → opencode run
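The two backends can be modeled as one enum that builds the matching command line. The variant names and the flags are taken from the lines above; the fields on each variant are assumptions, and how the prompt text reaches the process is not shown in the source, so it is left out here.

```rust
use std::process::Command;

pub enum ArmBackend {
    /// Direct Claude CLI; session_id is None until the first reply returns one.
    Claude { session_id: Option<String> },
    /// OpenCode with a provider/model string.
    OpenCode { model: String },
}

impl ArmBackend {
    /// Build (but do not run) the command for this backend.
    pub fn command(&self) -> Command {
        match self {
            ArmBackend::Claude { session_id } => {
                let mut cmd = Command::new("claude");
                cmd.args(["-p", "--output-format", "json"]);
                if let Some(id) = session_id {
                    // Later messages resume the stored session.
                    cmd.args(["--resume", id.as_str()]);
                }
                cmd
            }
            ArmBackend::OpenCode { model } => {
                let mut cmd = Command::new("opencode");
                cmd.args(["run", "-m", model.as_str(), "--format", "json"]);
                cmd
            }
        }
    }
}
```

Because both variants produce a `Command` that emits JSON, the caller can treat them the same way, which is the point of swapping a model without touching the arm.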

The boring diagram. The one that actually matters.

Data Path

JSON in. JSON out. No scraping.

Every message follows the same clean path: WebSocket in, classify, route, dispatch, record, render. No screen scraping, no ANSI parsing. Session continuity via --resume means arms pick up mid-thought.

A deterministic pipeline you can debug with a single curl.

1 Jake types message → WebSocket (web.rs)

↓ classify() → task type → route()

2 pick_account() → rotate token accounts

↓ ClaudeArm.send() → claude -p --output-format json --resume {id}

3 Response: result · session_id · cost_usd · token counts

↓ ringo_chat write · ctx_{name} write · ringo_performance write

4 Scan response for @mentions → auto-route to named arm

↓ WebSocket → browser renders
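Step 4, scanning a response for @mentions, can be sketched as a plain token scan. The function name and the matching rules (case-insensitive, trailing punctuation ignored, unknown names dropped) are assumptions made for illustration.

```rust
/// Return the roster arms mentioned as @name in a response,
/// in first-seen order and without duplicates.
pub fn mentioned_arms<'a>(response: &str, roster: &[&'a str]) -> Vec<&'a str> {
    let mut found: Vec<&'a str> = Vec::new();
    for token in response.split_whitespace() {
        if let Some(rest) = token.strip_prefix('@') {
            // Keep the leading name characters so "@dick," still matches "dick".
            let name = rest
                .chars()
                .take_while(|c| c.is_alphanumeric() || *c == '_')
                .collect::<String>()
                .to_lowercase();
            if let Some(arm) = roster.iter().copied().find(|a| *a == name) {
                if !found.contains(&arm) {
                    found.push(arm);
                }
            }
        }
    }
    found
}
```

Matching only against a known roster means an address like `user@example.com` or a mention of someone who is not an arm never triggers a dispatch.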

Ingestion

One door. Every source.

Voice calls, webhooks, SIP, cron jobs — every external signal enters through a single REST endpoint. The channel tracks the source, routes to the right arm, and logs everything. Including a full voice pipeline: SIP in, local STT, JARVIS responds, TTS back out.

Anything that can POST a JSON body can talk to the octopus.

POST /channel/:source

source = "voice" | "webhook" | "sip" | "cron" | *

body = { "message": "...", "arm": "jarvis" }

→ ringo_chat (source tracked)

→ dispatch to named arm or best_arm_for_task()

Voice Pipeline

SIP call (lab:5060) → baresip auto-answer

→ faster-whisper STT (local, no API)

→ POST /channel/voice → JARVIS responds

→ edge-tts → audio back to caller

~7-17s latency budget for phone UX

phone → SIP → STT → POST /channel/voice → jarvis → TTS → audio

Deployment

One binary. Updates itself.

Hit GET /update and the server rebuilds from source, runs the test suite, swaps the binary, and restarts — without dropping active arm sessions. The HTML interface is embedded via include_str!(). Ship the binary, ship the product.

Deploy means one file. Update means one endpoint.

GET /update

1. cargo build --release (background)

2. run test suite

3. copy binary → ~/.cargo/bin/ringo

4. systemctl --user restart ringo

HTML is embedded in the binary via include_str!(). Shipping the interface means shipping the binary.

cargo build --release → one file → runs → serves

The Vault isn't paranoia. It's memory. Different kind.

Identity

Laptops are disposable. This isn't.

The vault is a cloud-synced directory holding every arm's identity document, expertise map, and configuration. Point Ringo at a database and a vault, and the entire octopus reconstitutes. Hardware is replaceable. Identity is permanent.

One command to wake up the whole system on any machine.

identity/

├── ike/ IKE.md · architecture · systems

├── theo/ THEO.md · essays · philosophy

├── claudette/ CLAUDETTE.md · EQ · voice

├── tweak/ TWEAK.md · fast · limbic

└── jarvis/ JARVIS.md · constitution · mission · phases

ringo init --db postgresql:///wiz_stk --vault /mnt/gdrive-vault/

Point it at a database and a vault. The octopus wakes up.

"The clock is in the blood."

— The Pineal Gland, Theo
